Notations

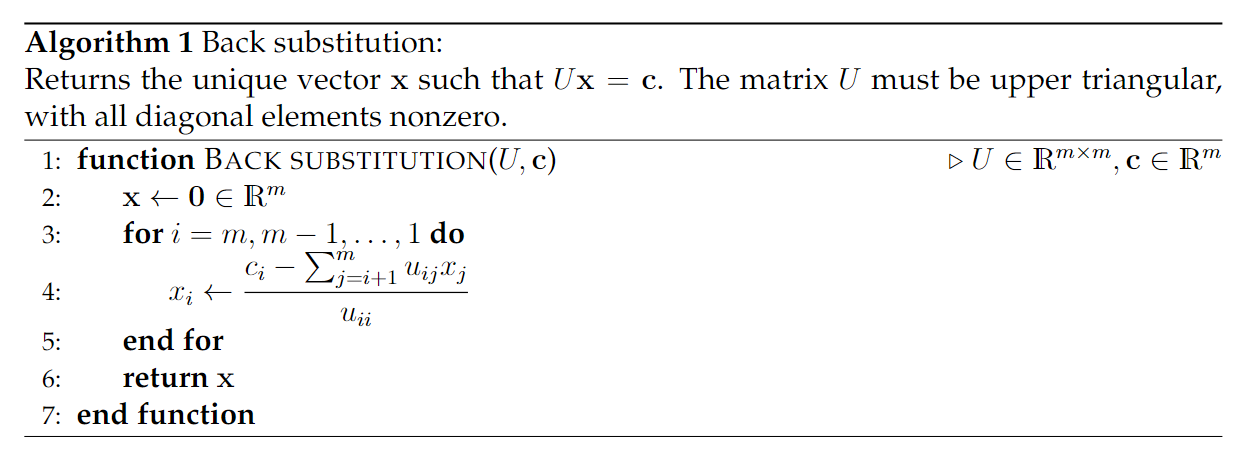

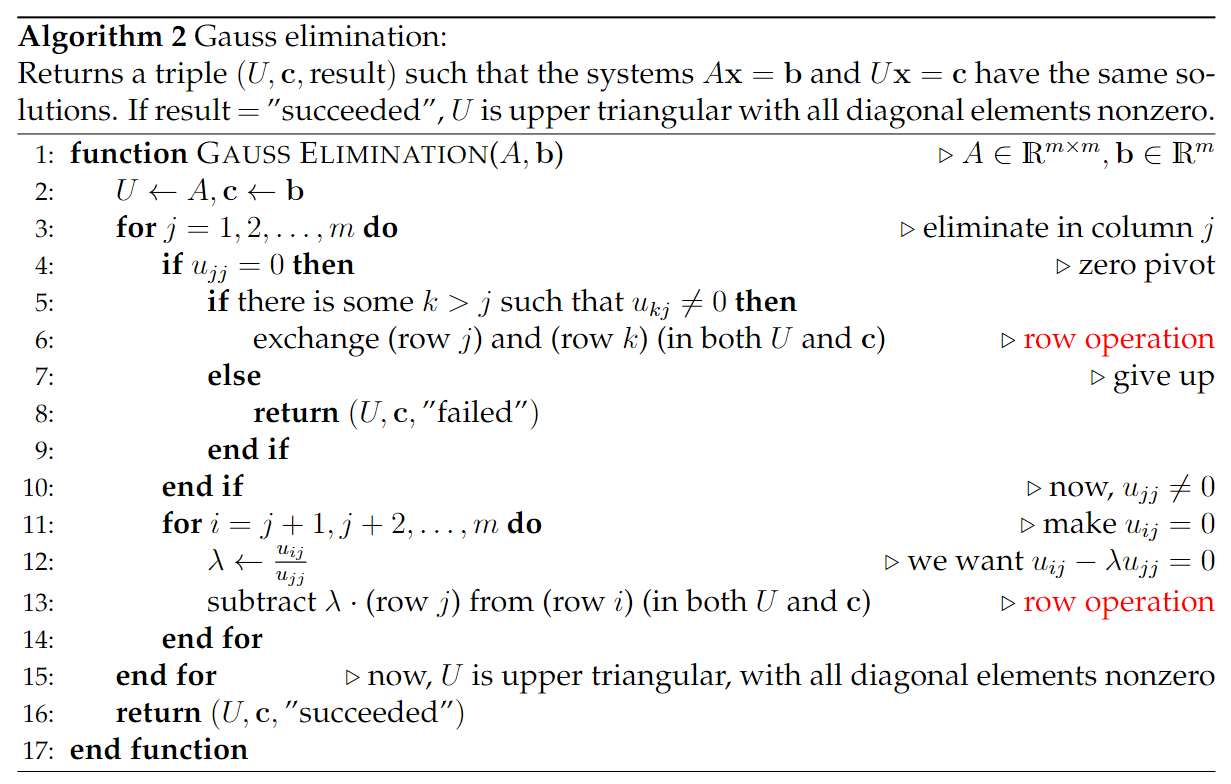

Method: Gauss elimination

Gauss elimination succeeds if and only if the columns of $A$ are linearly independent.
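As an illustration of the method, here is a minimal sketch of Gauss elimination for a square system $Ax=b$, using exact rational arithmetic. The function name `gauss_solve` and the choice to return `None` when a column has no pivot are assumptions made for this example, not part of the notes.

```python
from fractions import Fraction

def gauss_solve(A, b):
    """Solve the square system Ax = b by Gauss elimination.

    Returns the unique solution as a list of Fractions, or None when some
    column has no pivot (the columns of A are linearly dependent).
    """
    n = len(A)
    # Augmented matrix [A | b] with exact rational entries.
    M = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(A, b)]

    for col in range(n):
        # Look for a nonzero entry in this column, at or below the diagonal.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            return None  # no pivot: elimination fails
        M[col], M[pivot] = M[pivot], M[col]
        # Eliminate the entries below the pivot.
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            M[r] = [x - factor * y for x, y in zip(M[r], M[col])]

    # Back substitution on the resulting upper triangular system.
    x = [Fraction(0)] * n
    for i in reversed(range(n)):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x
```

For example, `gauss_solve([[2, 1], [1, 3]], [3, 5])` gives $x=(4/5,\,7/5)$, while `gauss_solve([[1, 2], [2, 4]], [1, 2])` returns `None` because the second column is a multiple of the first.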

Method: Gauss-Jordan Elimination

All solutions of $Ax=b$

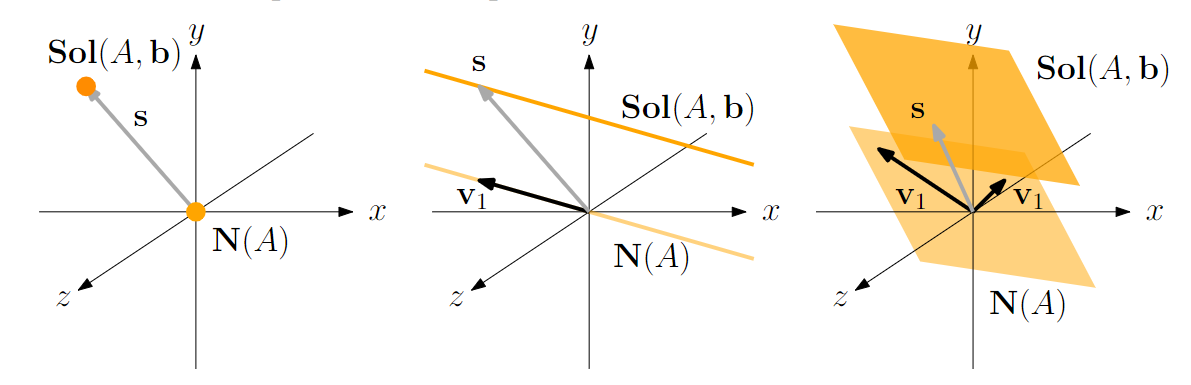

Definition: The solution space

It is clear that the solution space of $Ax=0$ is the null space $N(A)$ (see How to compute null space)

and the solution space of $Ax=b$ is just a shifted null space.

visualization of a shifted null space

Thus,

Thus,

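To solve $Ax=b$, we just compute one solution $s$ using Gauss-Jordan elimination and a basis $v_{1},\dots,v_{k}$ of $N(A)$. Then every solution has the form $s+\lambda_{1}v_{1}+\dots+\lambda_{k}v_{k}$ with $\lambda_{i}\in \mathbb{R}$.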

Generally, a shifted copy of a subspace in a vector space is called an affine subspace.
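The recipe "one solution plus a basis of the null space" can be sketched as follows. This is an illustrative implementation under stated assumptions: the helper names `rref` and `solve_all` are hypothetical, the particular solution is obtained by setting all free variables to zero, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction

def rref(M):
    """Return (R, pivot_columns), where R is the reduced row echelon form of M."""
    R = [[Fraction(v) for v in row] for row in M]
    rows, cols = len(R), len(R[0])
    pivots = []
    r = 0
    for c in range(cols):
        if r == rows:
            break
        pivot = next((i for i in range(r, rows) if R[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot here: this column belongs to a free variable
        R[r], R[pivot] = R[pivot], R[r]
        R[r] = [v / R[r][c] for v in R[r]]  # scale the pivot to 1
        for i in range(rows):
            if i != r and R[i][c] != 0:
                f = R[i][c]
                R[i] = [a - f * p for a, p in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
    return R, pivots

def solve_all(A, b):
    """Return (particular solution, null space basis) of Ax = b, or None if unsolvable."""
    n = len(A[0])
    R, pivots = rref([list(row) + [rhs] for row, rhs in zip(A, b)])
    if n in pivots:
        return None  # pivot in the right-hand-side column: inconsistent system
    free = [c for c in range(n) if c not in pivots]
    # Particular solution: all free variables set to 0.
    s = [Fraction(0)] * n
    for row, c in enumerate(pivots):
        s[c] = R[row][n]
    # One null space basis vector per free variable.
    basis = []
    for f in free:
        v = [Fraction(0)] * n
        v[f] = Fraction(1)
        for row, c in enumerate(pivots):
            v[c] = -R[row][f]
        basis.append(v)
    return s, basis
```

For $A=\begin{bmatrix}1&2&3\\2&4&6\end{bmatrix}$ and $b=(1,2)$, `solve_all` returns the particular solution $(1,0,0)$ and the basis $(-2,1,0),(-3,0,1)$ of $N(A)$. The number of basis vectors is $n$ minus the number of pivots, here $3-1=2$.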

Theorem 6.2.2

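Suppose the solution space of $Ax=b$ is not empty. Then it equals $x_{1}+N(A)$, where $x_{1}\in C(A^{\top})$ is the unique vector in the row space such that $Ax_{1}=b$.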

Now we know that we can find a unique vector $x_{1}\in C(A^{\top})$ that represents the solution space. The vector $x_{1}$ is orthogonal to $N(A)$ (it is the shortest position vector).
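One way to compute the solution that lies in the row space $C(A^{\top})$ is to write it as $A^{\top}y$ and solve $AA^{\top}y=b$. The sketch below assumes that the rows of $A$ are linearly independent, so that $AA^{\top}$ is invertible; this assumption and the names `solve_square` and `row_space_solution` are choices made for this example only.

```python
from fractions import Fraction

def solve_square(M, rhs):
    """Solve M y = rhs for an invertible square M by Gauss-Jordan elimination."""
    n = len(M)
    T = [[Fraction(v) for v in row] + [Fraction(r)] for row, r in zip(M, rhs)]
    for c in range(n):
        # Raises StopIteration if M is singular (assumed not to happen here).
        p = next(i for i in range(c, n) if T[i][c] != 0)
        T[c], T[p] = T[p], T[c]
        T[c] = [v / T[c][c] for v in T[c]]
        for i in range(n):
            if i != c:
                f = T[i][c]
                T[i] = [a - f * q for a, q in zip(T[i], T[c])]
    return [T[i][n] for i in range(n)]

def row_space_solution(A, b):
    """Return the solution of Ax = b that lies in C(A^T).

    Assumes the rows of A are linearly independent, so A A^T is invertible.
    """
    m, n = len(A), len(A[0])
    # Gram matrix A A^T (m x m).
    G = [[sum(A[i][k] * A[j][k] for k in range(n)) for j in range(m)]
         for i in range(m)]
    y = solve_square(G, b)
    # A^T y is a linear combination of the rows of A, hence in C(A^T).
    return [sum(A[i][k] * y[i] for i in range(m)) for k in range(n)]
```

For $A=\begin{bmatrix}1&1\end{bmatrix}$ and $b=2$ this gives $(1,1)$. Every solution has the form $(2-\lambda,\lambda)$, and $(1,1)$ is the one orthogonal to $N(A)=\operatorname{span}\{(-1,1)\}$, which is also the shortest one.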

- Proof

Edge case

Question

What if the solution space is empty?

Let Consider the equation is linear independent from The equation has a solution if

Let , Proof: and can have a same solution and

Proof: with This means Let

Application: Row Independent Equation Systems

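If $A\in \mathbb{R}^{m\times n}$ and the rows of $A$ are linearly independent, then $Ax=b$ is solvable for all $b\in \mathbb{R}^{m}$. Proof: the rank of $A$ is $m$, so $C(A)=\mathbb{R}^{m}$ and every $b$ lies in $C(A)$.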

Application: Projection System

$A^{\top}Ax=A^{\top}b$ is always solvable, for all $b$. Proof: (see Lemma 5.1.10)
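To see this on a small case, the sketch below assumes that the projection system is the normal equations $A^{\top}Ax=A^{\top}b$ and checks solvability by elimination on the augmented matrix. The helper names `is_solvable` and `normal_equations` are hypothetical.

```python
from fractions import Fraction

def is_solvable(M, rhs):
    """Check whether M x = rhs has a solution by eliminating on [M | rhs]."""
    T = [[Fraction(v) for v in row] + [Fraction(r)] for row, r in zip(M, rhs)]
    rows, cols = len(T), len(T[0])
    r = 0
    for c in range(cols - 1):  # pivot only on the columns of M
        p = next((i for i in range(r, rows) if T[i][c] != 0), None)
        if p is None:
            continue
        T[r], T[p] = T[p], T[r]
        for i in range(r + 1, rows):
            f = T[i][c] / T[r][c]
            T[i] = [a - f * q for a, q in zip(T[i], T[r])]
        r += 1
        if r == rows:
            break
    # Rows r, r+1, ... are zero in the M part; consistent iff their rhs is zero too.
    return all(T[i][-1] == 0 for i in range(r, rows))

def normal_equations(A, b):
    """Return the pair (A^T A, A^T b)."""
    m, n = len(A), len(A[0])
    AtA = [[sum(A[k][i] * A[k][j] for k in range(m)) for j in range(n)]
           for i in range(n)]
    Atb = [sum(A[k][i] * b[k] for k in range(m)) for i in range(n)]
    return AtA, Atb

# A has dependent columns and b is not in C(A), so Ax = b has no solution.
A = [[1, 2], [1, 2], [0, 0]]
b = [0, 2, 1]
AtA, Atb = normal_equations(A, b)
print(is_solvable(A, b))      # False
print(is_solvable(AtA, Atb))  # True
```

Here $A^{\top}A=\begin{bmatrix}2&4\\4&8\end{bmatrix}$ is singular, yet $A^{\top}b=(2,4)$ still lies in its column space, so the system remains solvable.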

Dimension of the solution space

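If $Ax=b$ has a solution, then the solution space has dimension $n-r$, where $r$ is the rank of $A$, since it is a shifted copy of $N(A)$ and $\dim N(A)=n-r$.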

Lemma 6.2.1

**Proof**:

$x \in C(A^{\top}), y\in C(A^{\top}) \implies x-y\in C(A^{\top})$

$Ax=Ay\implies A(x-y)=0\implies x-y\in N(A)\implies x-y \in C(A^{\top})^{\perp}$

$(x-y\in C(A^{\top}))\wedge (x-y\in C(A^{\top})^{\perp})\implies x-y=0\implies x=y$

## Certificate

$A\in \mathbb{R}^{m\times n},b\in \mathbb{R}^{m},A=\begin{bmatrix}a_{1}^{\top} \\ a_{2}^{\top} \\ \vdots \\ a^{\top}_{m}\end{bmatrix}$

$a_{i}\in \mathbb{R}^{n}$, consider $P=\{ x \in \mathbb{R}^{n}|a_{i}^{\top}x\leq b_{i}, \;i \in[m]\}=\{ x \in \mathbb{R}^{n}|Ax\leq b\}$

### Theorem: Farkas

$P=\{ x \in \mathbb{R}^{n}|Ax\leq b\}=\varnothing\Longleftrightarrow \{ z\in \mathbb{R}^{m}|A^{\top}z=0,b^{\top}z=-1,z\geq0 \}\neq\varnothing$

OR formally

![[Pasted image 20251114115652.png]]

Let $A\in \mathbb{R}^{m\times n}$. Let $x,y\in C(A^{\top})$. We have $$ Ax=Ay\Longleftrightarrow x=y